Strategy is the most upstream form of design.

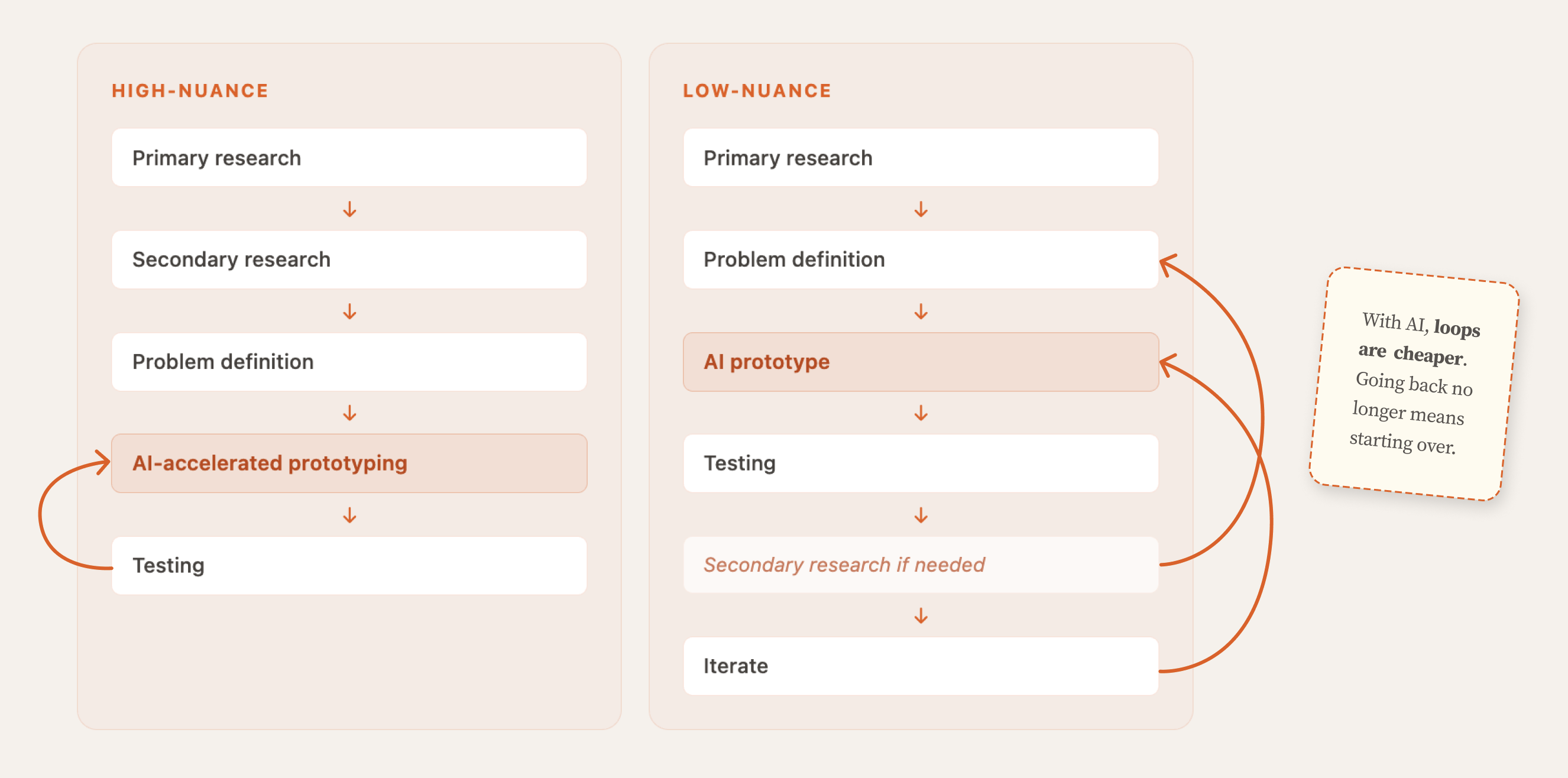

One of the biggest mindset shifts I got from Parsons was learning when to step back from the problem entirely.

Most people enter a business challenge at the subject level: optimizing a product, fixing a process, refining a feature. The first question I ask instead is whether we are looking at a symptom or at the system producing it. That judgment call, driven by systems thinking, is where strategic design separates from UX design.

Much of this thinking was shaped by my professor Raz Godelnik, whose work on strategic design continues to influence how I approach complex problems.

Business Strategy

Two frameworks I use to read the strategic environment before any design work begins.

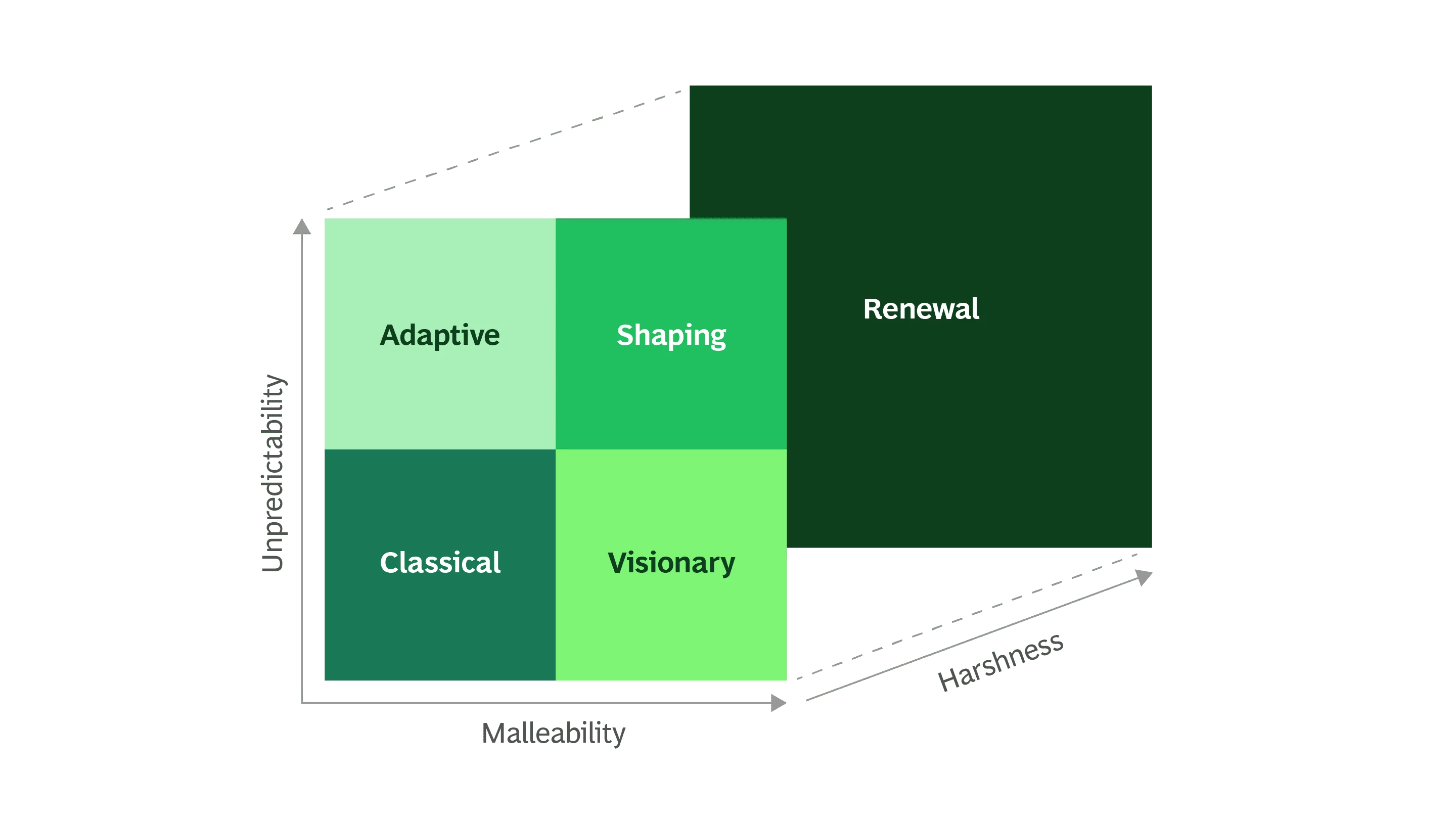

BCG Strategy Palette

Before I define a design direction, I need to know which strategic environment the business actually operates in (classical, adaptive, visionary, shaping, or renewal), because each demands fundamentally different design logic.

BerylElitesX

The Palette revealed a mismatch: the business operated in an Adaptive environment but was applying Classical design logic. That reframe reshaped the strategic brief.

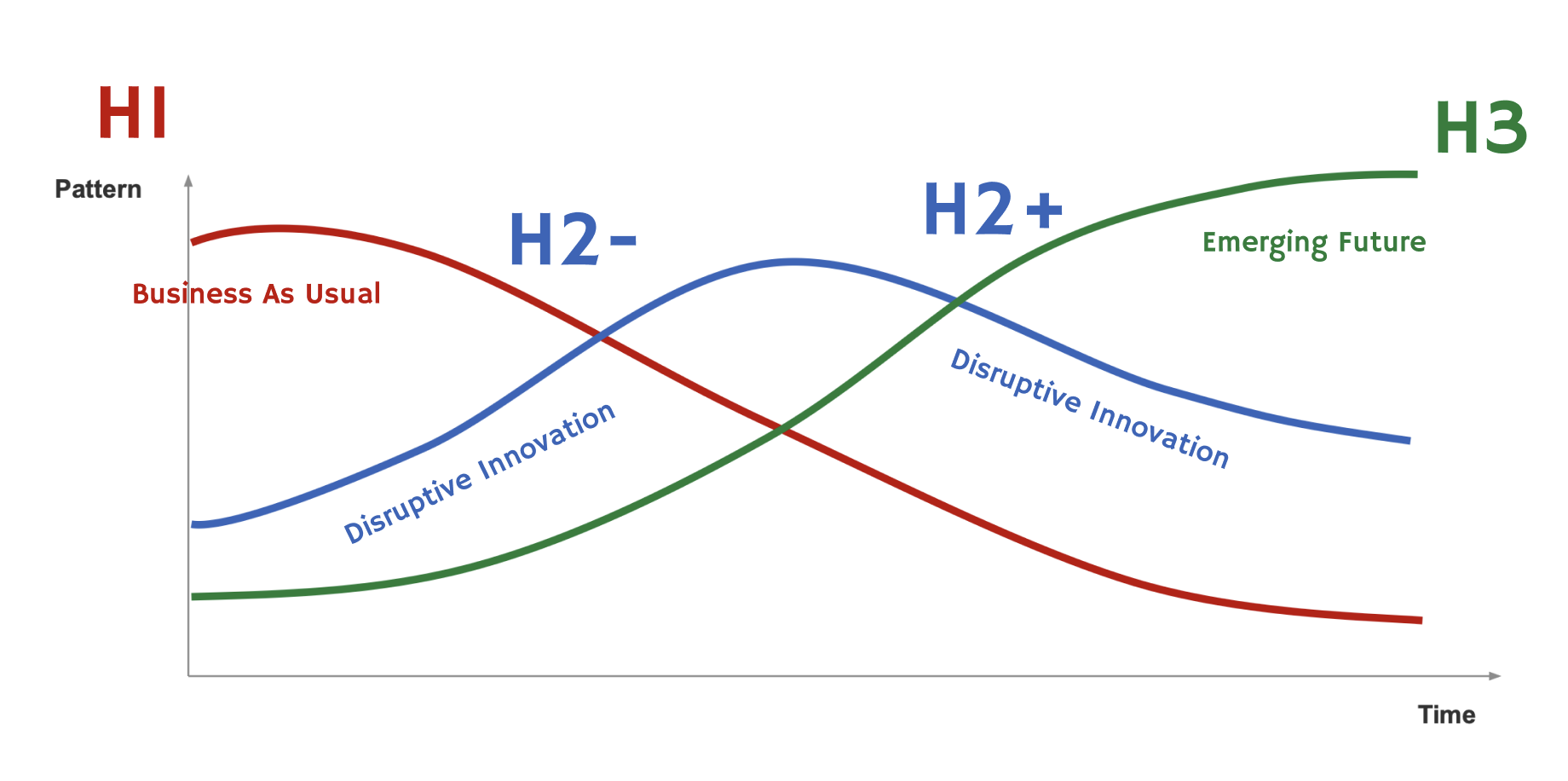

Three Horizons

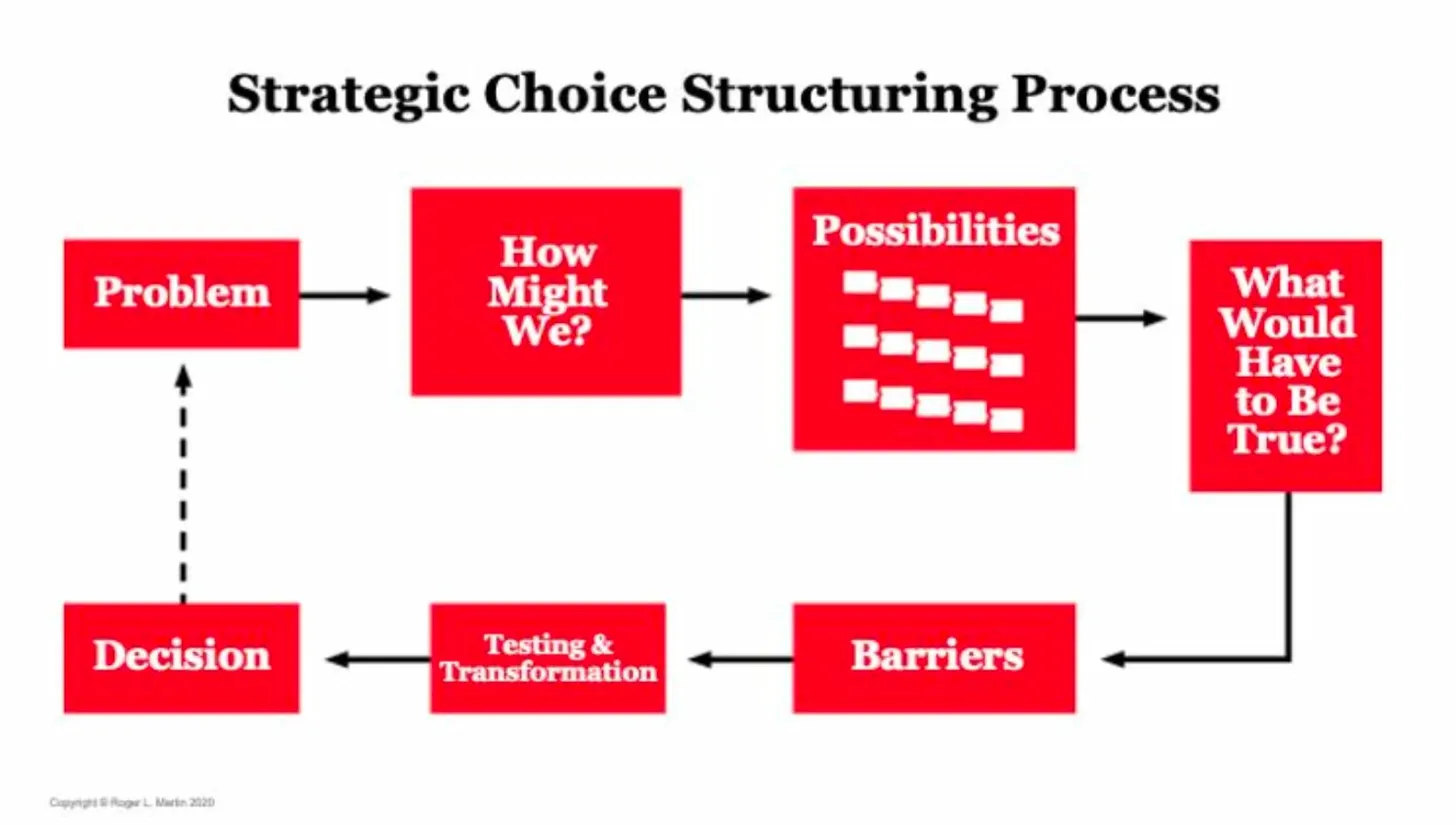

My practice is to establish H1 (the current system) and H3 (the desired future) before engaging H2 (the transition between them). Without those anchors, the middle horizon drifts into either unfounded optimism or paralysis. Roger Martin's question sharpens the work further: "What would have to be true for this transition to be possible?"

Google Maps Sustainability

The work sits in H2, reaching toward H3. H1 is the existing eco-certification infrastructure; H3 is a world where sustainable choices are effortless and invisible. The design question only becomes precise once both ends are established.

Referenced · Roger Martin's Strategic Choice Structuring Process

Product Strategy

Where strategic thinking connects directly to what gets built, how it's positioned, and how it enters the market.

Roadmap Prioritization

An impact vs. effort matrix turns team debate into a decision by making judgment criteria explicit — what to build now, what to watch, and what to drop.

Bayer

After we framed four opportunity directions from the research, a single core team session secured agreement on priorities and stakeholder buy-in, all in one room.

Market & Competitive Analysis

I combine Porter's Five Forces with the BCG Strategy Palette to analyze industry power dynamics and the strategic environment simultaneously.

Parkonomy

The analysis revealed that the competitive moat was not in the parking transaction but in the data layer. That reframing changed the entire product direction.

Go-to-Market Thinking

GTM is a product decision. The entry point determines which features matter, which partnerships are essential, and which users are prioritized first.

Parkonomy

B2B entry targeting property managers over individual drivers — validated with a letter of intent from one of China's largest real estate firms.